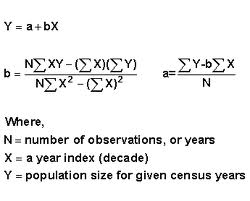

Not to be confused with the financial concept of mean reversion.

In statistics, regression toward the mean (also called reversion to the mean, and reversion to mediocrity) is the phenomenon where if one sample of a random variable is extreme, the next sampling of the same random variable is likely to be closer to its mean. Furthermore, when many random variables are sampled and the most extreme results are intentionally picked out, it refers to the fact that (in many cases) a second sampling of these picked-out variables will result in "less extreme" results, closer to the initial mean of all of the variables.

Mathematically, the strength of this "regression" effect depends on whether all of the random variables are drawn from the same distribution, or whether there are genuine differences in the underlying distributions for each random variable. In the first case, the "regression" effect is statistically likely to occur, but in the second case, it may occur less strongly or not at all.

Regression toward the mean is thus a useful concept to consider when designing any scientific experiment, data analysis, or test that intentionally selects the "most extreme" events. It indicates that follow-up checks may be useful in order to avoid jumping to false conclusions about these events: they may be "genuine" extreme events, a completely meaningless selection due to statistical noise, or a mix of the two cases.

Conceptual examples

Simple example: students taking a test

Consider a class of students taking a 100-item true/false test on a subject. Suppose that all students choose randomly on all questions. Then, each student's score would be a realization of one of a set of independent and identically distributed random variables, with an expected value of 50. Naturally, some students will score substantially above 50 and some substantially below 50 just by chance. If one selects only the top scoring 10% of the students and gives them a second test on which they again choose randomly on all items, the mean score would again be expected to be close to 50. Thus the mean of these students would "regress" all the way back to the mean of all students who took the original test. No matter what a student scores on the original test, the best prediction of their score on the second test is 50.

If choosing answers to the test questions was not random, i.e. if there were no luck (good or bad) or random guessing involved in the answers supplied by the students, then all students would be expected to score the same on the second test as they scored on the original test, and there would be no regression toward the mean.

Most realistic situations fall between these two extremes: for example, one might consider exam scores as a combination of skill and luck. In this case, the subset of students scoring above average would be composed of those who were skilled and did not have especially bad luck, together with those who were unskilled but were extremely lucky. On a retest of this subset, the unskilled will be unlikely to repeat their lucky break, while the skilled will have a second chance to have bad luck. Hence, those who did well previously are unlikely to do quite as well on the second test.

The following is an example of this second kind of regression toward the mean. A class of students takes two editions of the same test on two successive days. It has frequently been observed that the worst performers on the first day will tend to improve their scores on the second day, and the best performers on the first day will tend to do worse on the second day. The phenomenon occurs because student scores are determined in part by underlying ability and in part by chance. For the first test, some will be lucky and score more than their ability, and some will be unlucky and score less than their ability. Some of the lucky students on the first test will be lucky again on the second test, but more of them will have (for them) average or below-average scores. Therefore, a student who was lucky and over-performed their ability on the first test is more likely to have a worse score on the second test than a better score. Similarly, students who unluckily score less than their ability on the first test will tend to see their scores increase on the second test.
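The two test scenarios described above (pure guessing, and skill combined with luck) can be sketched as a small simulation. This is only an illustrative sketch, not part of the original text: the function name `simulate`, the class size, and the choice to model "ability" as a per-item probability drawn uniformly between 0.5 and 0.9 are all assumptions made for the example.

```python
import random
from statistics import mean


def simulate(n_students=2000, n_items=100, guess_only=True, seed=0):
    """Give every student two tests and compare the top 10% across them.

    Assumption for illustration: a student's "ability" is the probability
    of answering any single item correctly.
    """
    rng = random.Random(seed)

    if guess_only:
        # Everyone guesses on a true/false test: ability is 0.5 for all.
        ability = [0.5] * n_students
    else:
        # Skill plus luck: abilities genuinely differ between students.
        ability = [rng.uniform(0.5, 0.9) for _ in range(n_students)]

    def take_test(p):
        # Number of correct answers out of n_items independent items.
        return sum(rng.random() < p for _ in range(n_items))

    first = [take_test(p) for p in ability]
    second = [take_test(p) for p in ability]

    # Select the top-scoring 10% of students on the first test.
    k = n_students // 10
    top = sorted(range(n_students), key=lambda i: first[i], reverse=True)[:k]

    return {
        "all_first": mean(first),
        "top_first": mean(first[i] for i in top),
        "top_second": mean(second[i] for i in top),
    }


if __name__ == "__main__":
    print("guessing only:", simulate(guess_only=True))
    print("skill + luck: ", simulate(guess_only=False))
```

Under these assumptions, the guessing-only run should show the top group falling back to roughly the overall mean on the second test, while the skill-plus-luck run should show the top group staying well above the overall mean but somewhat below its own first-test average.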
